- Redirect chains

- Infinite scroll pagination

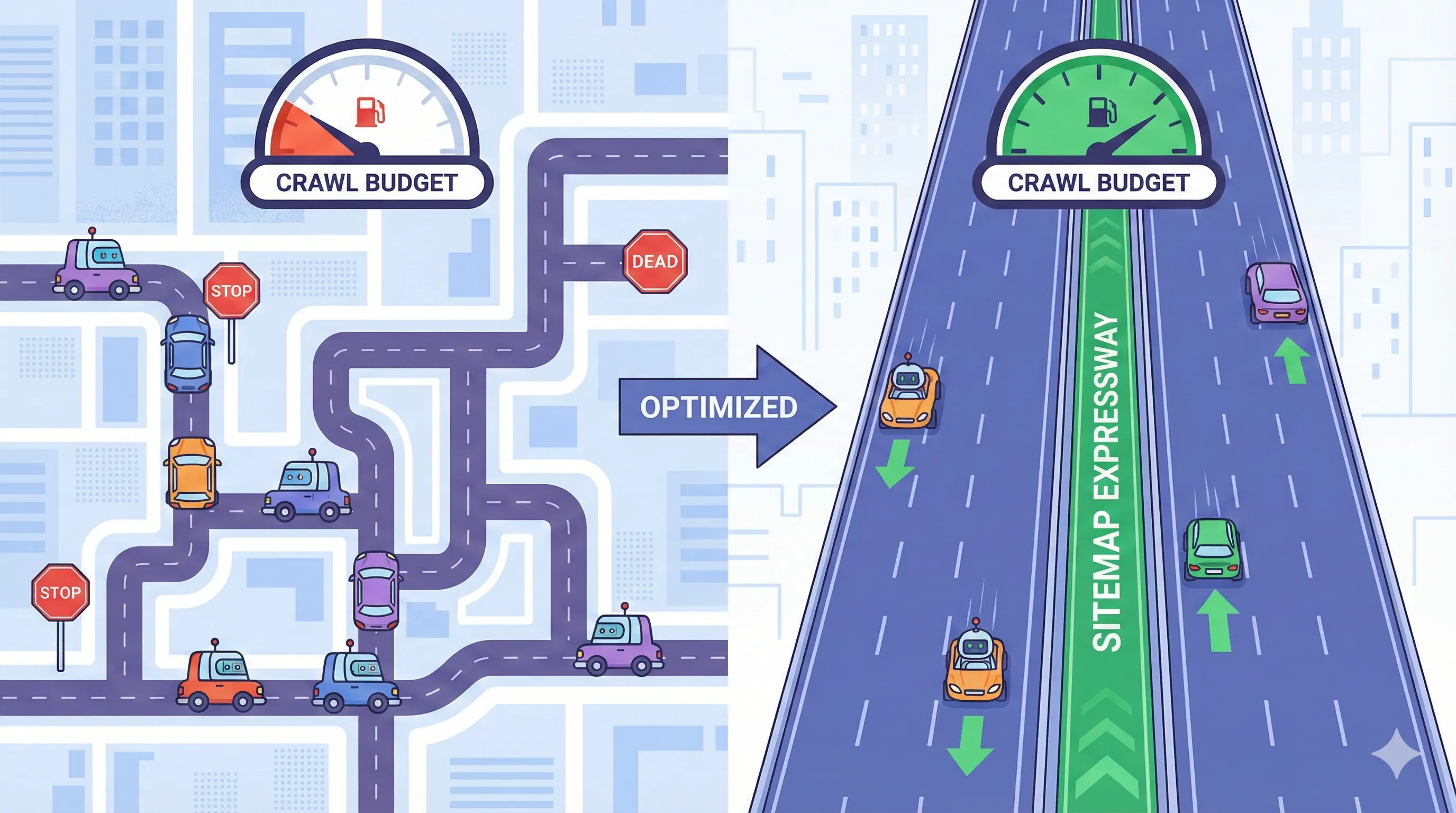

...Google might not have time left to crawl your important content.

The solution: Use sitemaps strategically to guide Googlebot toward your most valuable pages and away from the junk.

In this guide, I'll show you how crawl budget works, how to measure it, and how to optimize your sitemaps to make every crawl count. If you need a refresher on the basics, start with our sitemap fundamentals guide.

What is Crawl Budget?

Crawl budget is the number of pages Googlebot will crawl on your site within a given timeframe (usually per day).

It's determined by two factors:

- Crawl Rate Limit: How fast Google can crawl without overloading your server

- Crawl Demand: How much Google wants to crawl your site

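As a simplified mental model (not an official Google formula): Crawl Budget = min(Crawl Rate Limit, Crawl Demand). Googlebot crawls only as much as it wants to, and only as fast as your server allows.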

Crawl Rate Limit

What it is: The maximum speed at which Googlebot can request pages without causing server issues.

Factors that affect it:

- Server response time

- Error rate (5xx errors)

- Server capacity

- Hosting quality

Google's goal: Crawl as much as possible without degrading user experience.

Crawl Demand

What it is: How interested Google is in crawling your content.

Factors that increase demand:

- Fresh, frequently updated content

- High-quality pages

- Strong backlink profile

- Good user engagement signals

- Historical crawl success

Factors that decrease demand:

- Stale content

- Duplicate pages

- Low-quality content

- Poor user signals

- Crawl errors (see our troubleshooting guide)

Do You Need to Worry About Crawl Budget?

Short answer: Only if you have a large site.

Google's official stance:

"Crawl budget is not something most publishers have to worry about. If new pages tend to be crawled the same day they're published, crawl budget is not a factor for you."

You should care about crawl budget if:

- You have 10,000+ pages

- You frequently add/update content (100+ pages/day)

- You run an e-commerce site with thousands of products

- You operate a news site with constant updates

- You have a large forum or UGC platform

- Google Search Console shows pages "Discovered - currently not indexed"

You probably don't need to worry if:

- You have under 1,000 pages

- You update content weekly or less

- New pages get indexed within 24 hours

- You're a small business or blog

How to Check Your Crawl Budget

Method 1: Google Search Console

- Go to Settings → Crawl Stats

- Look at "Total crawl requests" over time

What to look for:

- Stable or increasing: Good sign

- Decreasing: Potential problem

- Spiky: Normal for news sites

Key metrics:

- Total crawl requests per day

- Average response time

- Crawl request breakdown (by response code)

Method 2: Server Log Analysis

More accurate than Search Console:

# Count Googlebot requests per day
grep "Googlebot" /var/log/apache2/access.log | \
  awk '{print $4}' | \
  cut -d: -f1 | \
  sort | uniq -c

What to track:

- Requests per day

- Pages crawled vs. total pages

- Crawl frequency per URL

- Response codes (200, 404, 301, etc.)
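
If you'd rather track these numbers in a script than on the command line, here is a minimal Python sketch. It assumes the Apache/Nginx "combined" log format and an English locale for month names. The function name `summarize_googlebot` and the sample log lines are made up for illustration.

```python
import re
from collections import Counter
from datetime import datetime

# Captures the day, request path, and status code of a combined-format log line.
LOG_PATTERN = re.compile(
    r'\[(?P<day>\d{2}/\w{3}/\d{4}):[^\]]+\] '
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3})'
)

def summarize_googlebot(lines):
    """Return Counters of Googlebot requests per day, per status code, and per path."""
    per_day, per_status, per_path = Counter(), Counter(), Counter()
    for line in lines:
        if "Googlebot" not in line:
            continue
        match = LOG_PATTERN.search(line)
        if match is None:
            continue  # Skip lines that are not in the expected format
        day = datetime.strptime(match["day"], "%d/%b/%Y").date().isoformat()
        per_day[day] += 1
        per_status[match["status"]] += 1
        per_path[match["path"]] += 1
    return per_day, per_status, per_path

# Hypothetical sample lines; in practice, iterate over your access log file.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [26/Nov/2025:14:30:00 +0000] "GET /products/shoe HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [26/Nov/2025:14:31:10 +0000] "GET /old-page HTTP/1.1" 404 128 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [26/Nov/2025:14:32:00 +0000] "GET /products/shoe HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
per_day, per_status, per_path = summarize_googlebot(sample)
print(dict(per_day), dict(per_status))
```

Keep in mind that matching on the user-agent string alone also counts bots that merely claim to be Googlebot. For an accurate picture, verify the requesting IPs (for example with a reverse DNS lookup) before trusting the totals.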

Tools for log analysis:

- Screaming Frog Log File Analyser

- Botify

- OnCrawl

Method 3: Calculate Crawl Rate

Formula:

Crawl Rate = Pages Crawled per Day / Total Pages

Example:

- Total pages: 50,000

- Pages crawled per day: 5,000

- Crawl rate: 10% per day

- Full site crawl: Every 10 days
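
The same arithmetic as a small Python sketch. The function names are my own, and the full-crawl estimate assumes Googlebot never requests the same URL twice, which real crawls rarely satisfy, so treat it as a lower bound.

```python
import math

def crawl_rate(pages_crawled_per_day, total_pages):
    """Fraction of the site crawled per day."""
    if total_pages <= 0:
        raise ValueError("total_pages must be positive")
    return pages_crawled_per_day / total_pages

def days_for_full_crawl(pages_crawled_per_day, total_pages):
    """Days needed to crawl every page once, assuming no URL is crawled twice."""
    if pages_crawled_per_day <= 0:
        raise ValueError("pages_crawled_per_day must be positive")
    return math.ceil(total_pages / pages_crawled_per_day)

rate = crawl_rate(5_000, 50_000)
days = days_for_full_crawl(5_000, 50_000)
print(f"Crawl rate: {rate:.0%} per day, full crawl in about {days} days")
```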

Good crawl rate (rough rules of thumb, not figures published by Google):

- News sites: 50-100% per day

- E-commerce: 20-50% per day

- Blogs: 10-30% per day

- Static sites: 5-10% per day

How Sitemaps Affect Crawl Budget

Sitemaps don't increase your crawl budget, but they help you use it more efficiently.

1. Priority Signaling

The <priority> tag (0.0 to 1.0) suggests which pages are most important:

<url>
  <loc>https://example.com/important-product</loc>
  <priority>1.0</priority> <!-- High priority -->
</url>
<url>
  <loc>https://example.com/old-blog-post</loc>
  <priority>0.3</priority> <!-- Low priority -->
</url>

Reality check: Google's sitemap documentation says it ignores <priority> values. Including the tag doesn't hurt, but don't rely on it to steer Googlebot.

2. Freshness Signals

The <lastmod> tag tells Google when content changed:

<url>
  <loc>https://example.com/news/breaking-story</loc>
  <lastmod>2025-11-26T14:30:00+00:00</lastmod> <!-- Updated today -->
</url>
<url>
  <loc>https://example.com/about</loc>
  <lastmod>2023-01-15</lastmod> <!-- Old, stable content -->
</url>

Impact: Google uses <lastmod> as a recrawl signal, provided the values are consistently and verifiably accurate.

Critical: Only update <lastmod> when content actually changes. Don't set it to "now" on every sitemap generation.

3. Explicit URL Discovery

Without a sitemap, Google discovers pages by:

- Following links from other pages

- Following external backlinks

- Guessing URL patterns (risky)

With a sitemap, you give Google explicit hints (it can still crawl URLs you leave out):

- "These are all my pages"

- "Don't waste time crawling junk"

- "Focus on these URLs"

4. Excluding Low-Value Pages

Don't include in your sitemap:

- Pagination pages (page=2, page=3, etc.)

- Filter/sort variations

- Search result pages

- Thank you pages

- Admin pages

- Duplicate content

Example of what NOT to include:

<!-- DON'T DO THIS -->
<url>
  <loc>https://example.com/products?page=2</loc> <!-- Pagination -->
</url>
<url>
  <loc>https://example.com/products?sort=price</loc> <!-- Filter -->
</url>
<url>
  <loc>https://example.com/search?q=shoes</loc> <!-- Search results -->
</url>

Instead: Only include canonical product pages.
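
One way to enforce this at generation time is to filter URLs before they reach the sitemap. The sketch below is only an illustration. The parameter names and path prefixes are assumptions about a typical URL scheme, so swap in the ones your site actually uses.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical parameter names and path prefixes; adjust to your own URL scheme.
LOW_VALUE_PARAMS = {"page", "sort", "filter", "q"}
LOW_VALUE_PREFIXES = ("/search", "/admin", "/thank-you")

def is_sitemap_worthy(url):
    """Return True if the URL has no low-value path prefix or query parameter."""
    parts = urlsplit(url)
    if parts.path.startswith(LOW_VALUE_PREFIXES):
        return False
    params = parse_qs(parts.query, keep_blank_values=True)
    return LOW_VALUE_PARAMS.isdisjoint(params)

candidates = [
    "https://example.com/products/blue-running-shoe",
    "https://example.com/products?page=2",
    "https://example.com/products?sort=price",
    "https://example.com/search?q=shoes",
]
sitemap_urls = [url for url in candidates if is_sitemap_worthy(url)]
print(sitemap_urls)
```

A filter like this only catches URL patterns. Duplicate content served under clean-looking URLs still needs a canonical check of its own.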

Crawl Budget Optimization Strategies

Strategy 1: Organize Sitemaps by Update Frequency

Split your sitemap by how often content changes:

sitemap_index.xml

├── sitemap-daily.xml (news, trending products)

├── sitemap-weekly.xml (blog posts)

├── sitemap-monthly.xml (product pages)

└── sitemap-static.xml (about, contact, policies)

Benefits:

- Google can prioritize fresh content

- Accurate <lastmod> dates per sitemap

- Easier to regenerate frequently-updated sections

Implementation:

<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap>
    <loc>https://example.com/sitemap-daily.xml</loc>
    <lastmod>2025-11-26</lastmod> <!-- Updated today -->
  </sitemap>
  <sitemap>
    <loc>https://example.com/sitemap-static.xml</loc>
    <lastmod>2025-01-15</lastmod> <!-- Rarely changes -->
  </sitemap>
</sitemapindex>
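
If your pages already carry an update-frequency label, the index can be generated rather than hand-written. This is a rough sketch under that assumption. The `(url, frequency, last_modified)` tuple shape and the `build_sitemap_index` name are hypothetical, and each sitemap's <lastmod> is taken as the newest modification date among its pages.

```python
from datetime import date
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap_index(pages, base_url):
    """Build a sitemap index from (url, frequency, last_modified) tuples."""
    newest = {}
    for _url, frequency, last_modified in pages:
        # Track the most recent change in each frequency bucket
        if frequency not in newest or last_modified > newest[frequency]:
            newest[frequency] = last_modified

    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for frequency in sorted(newest):
        sitemap = ET.SubElement(root, "sitemap")
        ET.SubElement(sitemap, "loc").text = f"{base_url}/sitemap-{frequency}.xml"
        ET.SubElement(sitemap, "lastmod").text = newest[frequency].isoformat()
    return ET.tostring(root, encoding="unicode")

# Hypothetical pages for illustration
pages = [
    ("https://example.com/news/breaking-story", "daily", date(2025, 11, 26)),
    ("https://example.com/news/older-story", "daily", date(2025, 11, 20)),
    ("https://example.com/about", "static", date(2025, 1, 15)),
]
index_xml = build_sitemap_index(pages, "https://example.com")
print(index_xml)
```

Deriving the index <lastmod> from the pages themselves keeps it honest: a sitemap file's date only moves when something inside it actually changed.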

Strategy 2: Use Accurate lastmod Dates

Bad (always "now"):

# DON'T DO THIS
for url in urls:
    lastmod = datetime.now().strftime("%Y-%m-%d")  # Always today!

Good (actual modification date):

# DO THIS
for page in pages:
    lastmod = page.updated_at.strftime("%Y-%m-%d")  # Real date

Impact: Google learns to trust your <lastmod> dates and crawls updated pages faster.
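
If your CMS doesn't store a reliable modification date, one possible workaround is to hash each page's content and only move the date when the hash changes. This is a sketch of that idea, not a standard technique from any particular library. The record layout and the `refresh_lastmod` helper are hypothetical.

```python
import hashlib
from datetime import date

def content_hash(content):
    """Stable fingerprint of the page body."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()

def refresh_lastmod(record, new_content, today):
    """Update record['lastmod'] only when the content actually changed."""
    new_hash = content_hash(new_content)
    if record.get("content_hash") != new_hash:
        record["content_hash"] = new_hash
        record["lastmod"] = today
    return record

# Hypothetical stored record for one page
record = {"lastmod": date(2025, 1, 15), "content_hash": content_hash("About us")}

refresh_lastmod(record, "About us", date(2025, 11, 26))           # unchanged content
unchanged_lastmod = record["lastmod"]

refresh_lastmod(record, "About us (new team)", date(2025, 11, 26))  # edited content
changed_lastmod = record["lastmod"]
print(unchanged_lastmod, changed_lastmod)
```

Hash the meaningful body content rather than the full rendered HTML, otherwise a rotating banner or timestamp in the template will make every page look modified.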

Strategy 3: Remove Orphan and Dead Pages

Audit your sitemap:

Use Sitemap Explorer to:

- Visualize all URLs in your sitemap

- Identify suspicious or outdated URLs

- Spot patterns (e.g., all URLs from a deleted section)

Then check Google Search Console → Sitemaps for 404 errors.

Remove:

- 404 pages

- 301 redirects (use final URL instead)

- 410 (Gone) pages

- noindex pages

See our 404 errors guide for detailed steps.
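
Once you have crawl results for your sitemap URLs (from a crawler export or your own checks), the removal rules above can be applied mechanically. The sketch below assumes each entry is a dict with `url`, `status`, and optional `noindex` and `redirect_target` keys. That shape is my own invention, and it assumes any redirect target has already been resolved to a final, working URL.

```python
def clean_sitemap(entries):
    """Return (urls_to_keep, removed) where removed maps each dropped URL to a reason."""
    keep, removed = [], {}
    for entry in entries:
        url, status = entry["url"], entry["status"]
        if status in (301, 302, 307, 308):
            target = entry.get("redirect_target")
            removed[url] = f"redirects to {target}"
            # List the final URL instead of the redirecting one
            if target and target not in keep:
                keep.append(target)
        elif status != 200:
            removed[url] = f"returns {status}"
        elif entry.get("noindex"):
            removed[url] = "noindex"
        elif url not in keep:
            keep.append(url)
    return keep, removed

# Hypothetical crawl results for illustration
entries = [
    {"url": "https://example.com/a", "status": 200},
    {"url": "https://example.com/old-a", "status": 301, "redirect_target": "https://example.com/a"},
    {"url": "https://example.com/gone", "status": 410},
    {"url": "https://example.com/missing", "status": 404},
    {"url": "https://example.com/private", "status": 200, "noindex": True},
]
keep, removed = clean_sitemap(entries)
print(keep)
print(removed)
```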

Strategy 4: Limit Sitemap Size

Keep individual sitemaps under:

- 40,000 URLs (not the max of 50,000)

- 40MB uncompressed (not the max of 50MB)

Why: Leaves room for growth and faster processing.

If you exceed limits:

<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">

<sitemap>

<loc>https://example.com/sitemap-products-1.xml</loc>

</sitemap>

<sitemap>

<loc>https://example.com/sitemap-products-2.xml</loc>

</sitemap>

<sitemap>

<loc>https://example.com/sitemap-products-3.xml</loc>

</sitemap>

</sitemapindex>
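
Splitting by URL count is simple to automate. Here is a minimal sketch using the 40,000-URL ceiling suggested above. The function name and file naming scheme are assumptions, and it only checks URL count, so you would still need to verify each file stays under the size limit.

```python
def split_into_sitemaps(urls, base_name, max_urls=40_000):
    """Map sitemap filenames to chunks of at most max_urls URLs each."""
    return {
        f"{base_name}-{start // max_urls + 1}.xml": urls[start:start + max_urls]
        for start in range(0, len(urls), max_urls)
    }

# Hypothetical catalogue of 100,000 product URLs
product_urls = [f"https://example.com/products/{n}" for n in range(100_000)]
chunks = split_into_sitemaps(product_urls, "sitemap-products")
for filename, chunk in chunks.items():
    print(filename, len(chunk))
```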

Strategy 5: Fix Technical Issues

Crawl budget killers:

- Slow server response time (>500ms)

- 5xx errors

- Redirect chains (A → B → C → D)

- Infinite scroll without pagination

- Duplicate content

How sitemaps help:

- Only include fast-loading pages

- Exclude error-prone sections

- Use final URLs (no redirects)

- For infinite-scroll listings, list the underlying item URLs explicitly so Googlebot can reach them without scrolling

Strategy 6: Use robots.txt Strategically

Block low-value sections:

User-agent: *

Disallow: /search/

Disallow: /cart/

Disallow: /checkout/

Disallow: /admin/

Disallow: /*?*sort=

Disallow: /*?*filter=

Sitemap: https://example.com/sitemap.xml

Don't block:

- Pages in your sitemap

- Important product/category pages

- Blog content

- Landing pages
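
A quick way to catch the first mistake (blocking pages that are in your sitemap) is to test sitemap URLs against your robots.txt rules. The sketch below uses Python's standard `urllib.robotparser`. As far as I know, that parser does plain prefix matching and does not interpret Google-style `*` wildcards, so the wildcard rules are left out here and would need a separate check. The `blocked_sitemap_urls` helper is my own.

```python
from urllib.robotparser import RobotFileParser

# Prefix rules only; wildcard rules are omitted because the stdlib parser
# is assumed not to interpret them the way Googlebot does.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /cart/
Disallow: /checkout/
Disallow: /admin/
"""

def blocked_sitemap_urls(robots_txt, sitemap_urls, user_agent="Googlebot"):
    """Return the sitemap URLs that the given robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in sitemap_urls if not parser.can_fetch(user_agent, url)]

# Hypothetical sitemap contents
sitemap_urls = [
    "https://example.com/products/blue-running-shoe",
    "https://example.com/blog/crawl-budget",
    "https://example.com/search/shoes",
]
conflicts = blocked_sitemap_urls(ROBOTS_TXT, sitemap_urls)
print(conflicts)
```

Any URL this returns is sending Google mixed signals: the sitemap asks for a crawl while robots.txt forbids it. Remove it from one place or the other.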

Strategy 7: Monitor Crawl Efficiency

Key metrics to track:

| Metric | Formula | Good Target |
|---|---|---|
| Crawl Coverage | Pages crawled / Total pages | >80% |
| Crawl Frequency | Days between crawls | <7 days for important pages |
| Crawl Waste | Crawls on low-value pages / Total crawls | <20% |
| Indexation Rate | Indexed pages / Submitted pages | >90% |
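
To show how the ratio-based rows fit together, here is a small sketch that computes them and checks each against its target. The input numbers are invented for illustration, and Crawl Frequency is left out because it needs per-URL crawl timestamps rather than totals.

```python
# Targets taken from the table above
TARGETS = {
    "crawl_coverage": lambda value: value > 0.80,
    "crawl_waste": lambda value: value < 0.20,
    "indexation_rate": lambda value: value > 0.90,
}

def crawl_metrics(total_pages, pages_crawled, low_value_crawls, total_crawls, indexed, submitted):
    """Compute the ratio-based metrics from raw counts."""
    return {
        "crawl_coverage": pages_crawled / total_pages,
        "crawl_waste": low_value_crawls / total_crawls,
        "indexation_rate": indexed / submitted,
    }

# Hypothetical counts for illustration only
metrics = crawl_metrics(
    total_pages=50_000, pages_crawled=42_000,
    low_value_crawls=1_500, total_crawls=10_000,
    indexed=34_000, submitted=40_000,
)
report = {name: (value, TARGETS[name](value)) for name, value in metrics.items()}
for name, (value, on_target) in report.items():
    print(f"{name}: {value:.0%} ({'on target' if on_target else 'needs work'})")
```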

Tools:

- Google Search Console (Crawl Stats)

- Screaming Frog Log File Analyser

- Botify or OnCrawl (enterprise)

Advanced: Dynamic Sitemap Prioritization

For very large sites, generate sitemaps dynamically based on page importance.

Python example:

import sqlite3
from datetime import datetime
from xml.sax.saxutils import escape

def calculate_priority(page):
    """Calculate priority based on multiple factors"""
    score = 0.5  # Base score

    # Boost for recent updates
    days_since_update = (datetime.now() - page['updated_at']).days
    if days_since_update < 7:
        score += 0.3
    elif days_since_update < 30:
        score += 0.2

    # Boost for traffic
    if page['monthly_views'] > 10000:
        score += 0.2
    elif page['monthly_views'] > 1000:
        score += 0.1

    # Boost for conversions
    if page['conversions'] > 100:
        score += 0.2
    elif page['conversions'] > 10:
        score += 0.1

    # Cap at 1.0
    return min(score, 1.0)

def generate_smart_sitemap():
    """Generate sitemap with calculated priorities"""
    conn = sqlite3.connect('analytics.db')
    try:
        cursor = conn.cursor()
        cursor.execute('''
            SELECT url, updated_at, monthly_views, conversions
            FROM pages
            WHERE published = 1
            ORDER BY updated_at DESC
        ''')
        rows = cursor.fetchall()
    finally:
        conn.close()  # Release the connection even if the query fails

    xml = '<?xml version="1.0" encoding="UTF-8"?>\n'
    xml += '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n'
    for row in rows:
        page = {
            'url': row[0],
            'updated_at': datetime.fromisoformat(row[1]),
            'monthly_views': row[2],
            'conversions': row[3],
        }
        priority = calculate_priority(page)
        xml += '  <url>\n'
        # Escape &, < and > so URLs with query strings stay valid XML
        xml += f'    <loc>{escape(page["url"])}</loc>\n'
        xml += f'    <lastmod>{page["updated_at"].strftime("%Y-%m-%d")}</lastmod>\n'
        xml += f'    <priority>{priority:.1f}</priority>\n'
        xml += '  </url>\n'
    xml += '</urlset>'
    return xml

Example: E-commerce Crawl Budget Optimization

Scenario: Online store with 100,000 products

Initial state:

- 100,000 URLs in sitemap

- Includes all filter/sort variations

- Includes out-of-stock products

- No organization by category

- Crawl rate: 5,000 pages/day (20 days for full crawl)

After optimization:

sitemap_index.xml

├── sitemap-new-products.xml (500 URLs, updated daily)

├── sitemap-bestsellers.xml (1,000 URLs, updated weekly)

├── sitemap-in-stock.xml (40,000 URLs, updated daily)

├── sitemap-categories.xml (500 URLs, updated monthly)

└── sitemap-content.xml (200 URLs, updated weekly)

Changes made:

- Removed out-of-stock products from sitemap

- Removed filter/sort variations

- Organized by product importance

- Added accurate <lastmod> dates

- Prioritized new arrivals and bestsellers
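
For a rough sense of scale, the sitemap sizes listed above can simply be added up. This back-of-the-envelope sketch assumes the crawl rate stays at 5,000 pages/day, that every crawl lands on a sitemap URL, and that no URL appears in two sitemaps. None of those assumptions is guaranteed in practice.

```python
import math

# URL counts from the optimized sitemap structure above
sitemap_sizes = {
    "sitemap-new-products.xml": 500,
    "sitemap-bestsellers.xml": 1_000,
    "sitemap-in-stock.xml": 40_000,
    "sitemap-categories.xml": 500,
    "sitemap-content.xml": 200,
}
CRAWL_RATE_PER_DAY = 5_000  # Unchanged from the initial state (an assumption)

total_urls = sum(sitemap_sizes.values())
days_for_full_pass = math.ceil(total_urls / CRAWL_RATE_PER_DAY)
print(f"{total_urls:,} URLs, about {days_for_full_pass} days for a full pass")
```

Under those assumptions, the sitemap shrinks from 100,000 to 42,200 URLs, and a full pass at the same crawl rate takes about 9 days instead of 20.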

Expected outcomes (actual results depend on site authority and Google's assessment):

- Improved crawl efficiency

- Faster indexing of important pages

- Better allocation of crawl resources

- Potential increase in organic visibility

Note: Specific percentage improvements vary significantly based on site size, authority, technical health, and content quality. Focus on the optimization principles rather than expected percentage gains.

Next Steps

Now that you understand crawl budget optimization:

- Measure your current crawl budget - Check Search Console

- Audit your sitemap - Remove low-value pages

- Organize by update frequency - Split into multiple sitemaps

- Fix technical issues - Improve server response time

- Monitor crawl stats - Track improvements over time

- Learn about sitemap organization - Read our sitemap index guide

Key Takeaways

- Crawl budget matters for sites with 10,000+ pages

- Sitemaps don't increase budget, but help you use it efficiently

- Use accurate <lastmod> dates - Google prioritizes fresh content

- Organize sitemaps by update frequency - Daily, weekly, monthly, static

- Remove low-value pages - Pagination, filters, duplicates

- Monitor crawl stats - Track coverage and efficiency

- Fix technical issues - Fast servers, no errors, no redirect chains

Bottom line: A well-optimized sitemap helps Google find and recrawl your most important content sooner, which supports faster indexing and better organic visibility.

Ready to analyze your crawl efficiency? Visualize your sitemap structure to see which pages you're prioritizing and identify optimization opportunities.